WTF is AI actually doing?

Contents

Intro

AI can really feel like magic. It can surprise us in how both “clever” and “stupid” it can be. But it’s not magic, it’s just mathematics init™.

Forming your own mental model of how Large Language Model - the AI model behind tools like ChatGPT and Claude work helps you use them well. I like to think of LLMs as four ingredients combined, and this mental model helps me anywhere from prompting ChatGPT to setting up Claude Code for agentic engineering - and I hope it helps Yous: plural of 'you'. English lost this after we stopped saying 'thou'; Irish English apparently re-introduced a plural for us too.

Let’s think about what happens when we ask ChatGPT about the weather: it “thinks” 🧠, calls a weather API 🛠️, gives a helpful answer 💬, word by word 🎱.

I’ve simplified things here - if you spot anything misleading, please drop me a message.

🎱 1. GPT: Predict the next word

GPT stands for Generative Pre-trained Transformer. That sounds a bit technical and the name doesn’t matter much, but there’s a reason it’s called “generative AI” - it’s generating words. Or rather tokens. A token is roughly ¾ of a word. I’ll say “words” and “tokens” interchangeably but technically they’re tokens.

You give it the start of a sentence, or a prompt, and it predicts the next word - over and over until it thinks that it’s done. That’s it, that’s the whole thing. But from that, we can do some very useful things.

Notice that last prediction? <EOS> is a special end-of-sequence token that tells the model to stop generating.

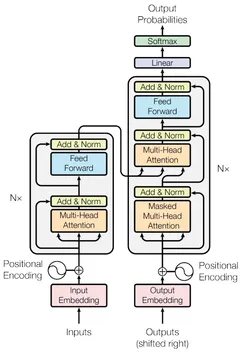

The architecture that made this work is the Transformer (the T in GPT), introduced in Google’s 2017 paper Attention is All You Need  The Transformer - Vaswani et al. (2017)

Open link ↗

.

The Transformer - Vaswani et al. (2017)

Open link ↗

.

What makes it work so well is a “self-attention” mechanism - it’s learnt what words mean in context. The word “bank” next to “river” means something completely different to “bank” next to “money”.

Click “bank” to see what it means here

💬 2. Predict an assistant response

Let’s put the chat in ChatGPT. We’ve made a text prediction machine, but it’s not that useful yet. The base model was trained on loads of long bits of text so it’s eager to keep writing. This means that when you ask it a question it just keeps going, beyond a useful answer - or it might generate more questions instead of an answer.

GPT before instruction tuning. Trained to predict the next word in text, so it just continues whatever you give it - it doesn't understand questions, instructions, or conversations.

Try the middle tab above 🎱 Chat formatting. That was the original hack: slap “User:” and “Assistant:” labels on your prompt and hope the base model gets the hint. It sort of works, but without stop sequences the model generates both sides of the conversation. It doesn’t know when to shut up.

So the model creators take the base model and then apply instruction tuning by providing examples of chats between a user and assistant and then the LLM learns to predict responses that are useful to the user and to stop generating at an appropriate time (using that special A special token in the model's vocabulary that signals 'I'm done generating' ).

🧠 3. Predict thought and reflection

Even after instruction tuning, these models can still be surprisingly stupid and bullshit confidently. Maths problems, multi-step logic, anything a human would need to think about - the models try to predict an answer in one-shot and can often get it wrong if the answer doesn’t already appear in the training data.

One fix is to let the model “think” before it answers. The idea of thinking step by step was first shown as a prompting technique - you ask the model to reason through a problem. This is still the same next-word prediction, but now it’s predicting a thinking process rather than jumping straight to a conclusion.

Modern reasoning models do this automatically. Depending on the The application around the model - ChatGPT's web UI, Claude Code's TUI, etc. Handles conversation format, tool execution, and displaying results you’re using, you can see the model What you see is typically a summary of the reasoning, not the raw internal chain-of-thought. Providers often treat the full thinking traces as proprietary about a problem before answering.

Sarah has 3 times as many apples as Tom. Together they have 24 apples. How many apples does Sarah have?

Sarah has 3 times as many apples as Tom. Together they have 24 apples. How many apples does Sarah have?

Let me define variables: let Tom have x apples, then Sarah has 3x.

Together they have: x + 3x = 24, so 4x = 24.

Therefore x = 6, meaning Tom has 6 apples.

Sarah has 3 × 6 = 18 apples.

Check: 6 + 18 = 24. ✓

Select an example and click Run comparison to see reasoning in action.

This extends beyond maths. Planning, debugging, coding - anything a human would think about before acting, the models benefit from thinking about too.

🛠️ 4. Predict taking actions

The models don’t know what the weather is. They have no internet access, no clock, no ability to run code. They’re still just a token prediction machine. But the tokens don’t have to be prose, we can have it predict structured actions that the The application around the model - ChatGPT's web UI, Claude Code's TUI, etc. Handles conversation format, tool execution, and displaying results can interpret.

Here’s the trick: you tell the model in its system prompt what Capabilities given to the model by the app running it - things like searching the web, doing maths, or reading files. 'Function calling' and 'tool use' are used interchangeably it has available. The model predicts a structured tool call (e.g. get_weather("London")). Something outside the model executes that call, gets the result, and feeds it back in. The model then predicts a response using the real data.

This is exactly what’s happening in the weather GIF at the top. All four ingredients are there: reasoning mode produces the thinking bubble, tool calling hits the weather API behind the scenes, and the response is next-word prediction using the real data.

Click Next Step to begin…

Click Next Step to begin…

The bash tool in Claude Code is a Swiss army knife. Instead of building a specific tool for every task, you just give the model a shell. It can run any command, read any file, install packages. That’s why Claude Code feels so capable - it’s a powerful model equipped with decades of tooling built with the Each tool does one thing well, designed to be combined together. A model with a shell inherits all of them .

Those are the four ingredients. But there are a couple of things worth knowing about how these models work day-to-day.

Knowledge cutoff

The model was trained on data up to a certain date. Ask it about anything after that and it either doesn’t know or, worse, Makes shit up: Models sometimes confidently state plausible but incorrect things for things they weren't trained on .

Ask a model with a 2024 cutoff and no internet access who the We've had six since 2016 - Cameron, May, Johnson, Truss, Sunak, Starmer. The models can barely keep up is in February 2026 and it’ll confidently get it wrong  ChatGPT with a 2024 cutoff, asked in Feb 2026

Open link ↗

.

ChatGPT with a 2024 cutoff, asked in Feb 2026

Open link ↗

.

This is why web search is such a useful tool: models can stay up to date without pre-training a new model - which takes a lot of time and compute 💸

Context window

Everything we’ve talked about: your input/prompts, the model’s reasoning, tool calls and their results, the final output - it all lives in a fixed-size workspace called the context window.

Modern models have large windows (Claude typically has 200k tokens, roughly 150,000 words). But they’re not infinite. Fill it with irrelevant stuff - a long conversation from an unrelated topic, or thousands of lines of code you won’t actually use - and you dilute the model’s attention.

Click Run Round 1 to start the visualisation.

Context management and The evolution of prompt engineering - rather than just crafting the right words, it's about curating all the information available to the model at each turn: system instructions, tools, data, message history. Context is a critical but finite resource are important skills for working well with LLMs and agents. If you’re not thinking about context and you’re getting bad results, that might be why. You ought to be deliberate about what goes in the context. Techniques like Separate model conversations spawned to handle subtasks - their context is isolated from the main conversation, which gets back a summary of the results help minimise Useful context getting diluted by irrelevant tokens as the window fills up - more about that another time.

Compaction: most The application around the model - ChatGPT's web UI, Claude Code's TUI, etc. Handles conversation format, tool execution, and displaying results automatically summarise the conversation when it gets towards the limit of the context. This can work well, but important details for the current task may get lost. If something seems off after a long session, starting fresh often helps.

LLMs have amnesia

Between conversations, the model remembers nothing. No persistent memory. Every conversation starts from a blank slate.

The memory features in ChatGPT, Claude, and others are just automated context management - they retrieve relevant summaries and inject them into your next conversation’s context window.

For coding agents, the equivalent is markdown files. CLAUDE.md or AGENTS.md, these get injected into context at the start of every session. The model’s “memory” of your project is just a file it reads. Which means you can edit it, version control it, and reason about exactly what the model knows when it starts each conversation.

Claude Code now has auto memory MEMORY.md which tries to automatically manage your memory, read more about it here.

Summary

ChatGPT, Claude, Gemini - they’re all the same four ingredients:

- 🎱 Next-word prediction trained on massive text data

- 💬 Instruction tuning to make it helpful and conversational

- 🧠 Reasoning to think before answering

- 🛠 Tool calling to interact with the real world

LLMs are just very capable text predictors, trained to be helpful, with thinking time and tools bolted on. Understanding this helps you use these models better - you’ll recognise why they fail, how to prompt them effectively, and what your coding agents are actually doing.